OpenAI fast-tracked a phone for 2027

Apple paid $250M for the Siri it never shipped, Raycast rebuilt from scratch, xAI launched Grok Build, AlphaEvolve hit TPU silicon, and Canvas leaked SIM students…

💬 Editor’s Note

For most of the last year, coding agents lived inside whatever IDE or terminal you were already using. This week three different teams broke that pattern. GitHub put Copilot in its own desktop app. xAI launched Grok Build as a standalone CLI. Raycast rebuilt itself from scratch and made AI a first-class surface instead of a plugin.

The other half of the week was hardware. OpenAI is reportedly fast-tracking a phone for early 2027, AlphaEvolve is now designing circuits inside Google’s next TPUs, and Apple wrote a $250 million cheque for the AI Siri it announced in 2024 and never shipped. The pattern feels new, but it’s the oldest one in tech. When the software gets good enough, everyone races for the chip and the surface it runs on.

📰 Top News

OpenAI is reportedly making a phone

Supply chain analyst Ming-Chi Kuo reported, via MacRumors, that OpenAI is fast-tracking a phone for mass production in early 2027. It runs on a customized MediaTek Dimensity 9600, with LPDDR6 memory, UFS 5.0 storage, and a dual-NPU architecture for running language and vision tasks at the same time. Kuo’s combined 2027 to 2028 shipment forecast is around 30 million units, which would put OpenAI’s first piece of hardware in the same volume range as a typical Samsung flagship.

The Jony Ive screenless gadget hasn’t been cancelled, but the company’s actual first device is now almost certainly going to be the boring one. A phone with a custom chip and dual NPUs is not a Jony Ive concept piece. It’s a play to own the surface where ChatGPT lives for the next decade.

https://www.theverge.com/ai-artificial-intelligence/924063/openai-phone-rumors-2027-ming-chi-kuo

Raycast rebuilt itself from scratch

Raycast shipped a public beta of an entirely new Mac app on May 14, after months of silence. The team rewrote the foundation, built their own disk indexer instead of relying on Spotlight, and folded files, folders, and contacts into Root Search. AI Chat and Quick AI now share a composer and remember context across conversations. Skills installed locally on your Mac auto-load. Dictation is system-wide and free during the beta, alongside GPT-5.4 mini access.

CEO Thomas Paul Mann’s note explains the delay: they didn’t want a feature update, they wanted a launcher that could host the next decade of personal computing. Cloud Sync and Focus aren’t ported over yet. It’s the most considered AI-first relaunch since Notion did the same in late 2023, and the first one where the AI doesn’t feel like a bolt-on.

https://www.raycast.com/blog/the-new-raycast

xAI launched Grok Build

xAI shipped Grok Build on May 15, a fast and flicker-free CLI built around parallel subagents, plans, and skills. It’s in early beta for SuperGrok Heavy subscribers and ships as the formal answer to Claude Code, Codex CLI, and Gemini CLI. The marketing site shows worktree-style multi-agent execution with skills like /make-interfaces-feel-better, plus side-question commands that don’t break the active turn.

Pricing is hidden behind the SuperGrok Heavy paywall, which is xAI’s preferred way to land a developer product these days. The interesting move is the bundle: Grok 4.3 from two weeks ago plus a coding agent shell now ship as one purchase, which makes the unit economics very different from Anthropic’s Claude Code or OpenAI’s Codex.

Apple paid $250M for an AI Siri it never shipped

On May 5, Apple agreed to pay $250 million (around S$319M) to settle a US class action over the AI Siri features it advertised in late 2024 and quietly delayed. The settlement covers roughly 36 million iPhone 16, 15 Pro, and 15 Pro Max units sold between June 2024 and March 2025. Eligible buyers get $25 per device, rising to as much as $95 depending on the final claimant count.

Apple admits no wrongdoing, but the National Advertising Division of BBB National Programs had already concluded the company falsely told customers the new AI Siri was “available now.” A Morgan Stanley survey filed with the complaint had “enhanced Siri” as the single most anticipated feature on the iPhone 16.

The settlement money is small. The signal is not. A settlement sets no binding precedent, but plaintiffs now have a working template for “falsely promoted AI capabilities” claims that covers ad campaigns whose actual product is two years out.
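For a sense of scale, here is a back-of-envelope sketch of the payout arithmetic, using only the figures quoted above. It assumes the whole fund is split evenly across claimed devices with nothing deducted for legal fees or administration, which a real settlement would not do, so treat the output as rough bounds rather than what claimants will actually receive. The function names are made up for illustration.

```python
# Back-of-envelope payout arithmetic from the figures quoted in the story.
# ASSUMPTION: the entire fund is split evenly per claimed device, with no
# deductions for legal fees or administration. Real payouts will differ.

FUND_USD = 250_000_000         # settlement amount
ELIGIBLE_DEVICES = 36_000_000  # "roughly 36 million" eligible units
PAYOUT_LOW_USD = 25            # quoted per-device payout
PAYOUT_HIGH_USD = 95           # quoted per-device ceiling


def per_device(fund: float, claimed_devices: float) -> float:
    """Even split of the fund across every claimed device."""
    return fund / claimed_devices


def implied_claim_rate(fund: float, eligible: float, payout: float) -> float:
    """Share of eligible devices that must be claimed to yield `payout` each."""
    return (fund / payout) / eligible


if __name__ == "__main__":
    everyone = per_device(FUND_USD, ELIGIBLE_DEVICES)
    low = implied_claim_rate(FUND_USD, ELIGIBLE_DEVICES, PAYOUT_LOW_USD)
    high = implied_claim_rate(FUND_USD, ELIGIBLE_DEVICES, PAYOUT_HIGH_USD)
    print(f"If every eligible device claimed: ${everyone:.2f} each")
    print(f"Claim rate implied by ${PAYOUT_LOW_USD}: {low:.1%}")
    print(f"Claim rate implied by ${PAYOUT_HIGH_USD}: {high:.1%}")
```

Under that naive split, a full claim rate works out to about $6.94 per device, the $25 figure implies roughly 28% of eligible devices being claimed, and the $95 ceiling implies roughly 7%.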

AlphaEvolve is designing the next TPUs

A year after DeepMind introduced AlphaEvolve, the team published a status report showing the agent has graduated from research demo to a core infrastructure tool. Jeff Dean confirmed AlphaEvolve proposed a circuit design “so counterintuitive yet efficient that it was integrated directly into the silicon of our next-generation TPUs.”

It also cut Google Spanner’s write amplification by 20%, found cache replacement policies in two days that previously took human teams months, reduced variant detection errors in PacBio genomics by 30%, and helped Klarna double the training speed of one of its largest transformer models. Terence Tao is publicly using it to attack Erdős problems and improve lower bounds on the Traveling Salesman Problem.

This is what “TPU brains designing TPU bodies” actually looks like in production, and it landed the same week as OpenAI’s phone rumour. Read both together and the AI hardware loop closes faster than most plans assumed.

https://deepmind.google/blog/alphaevolve-impact/

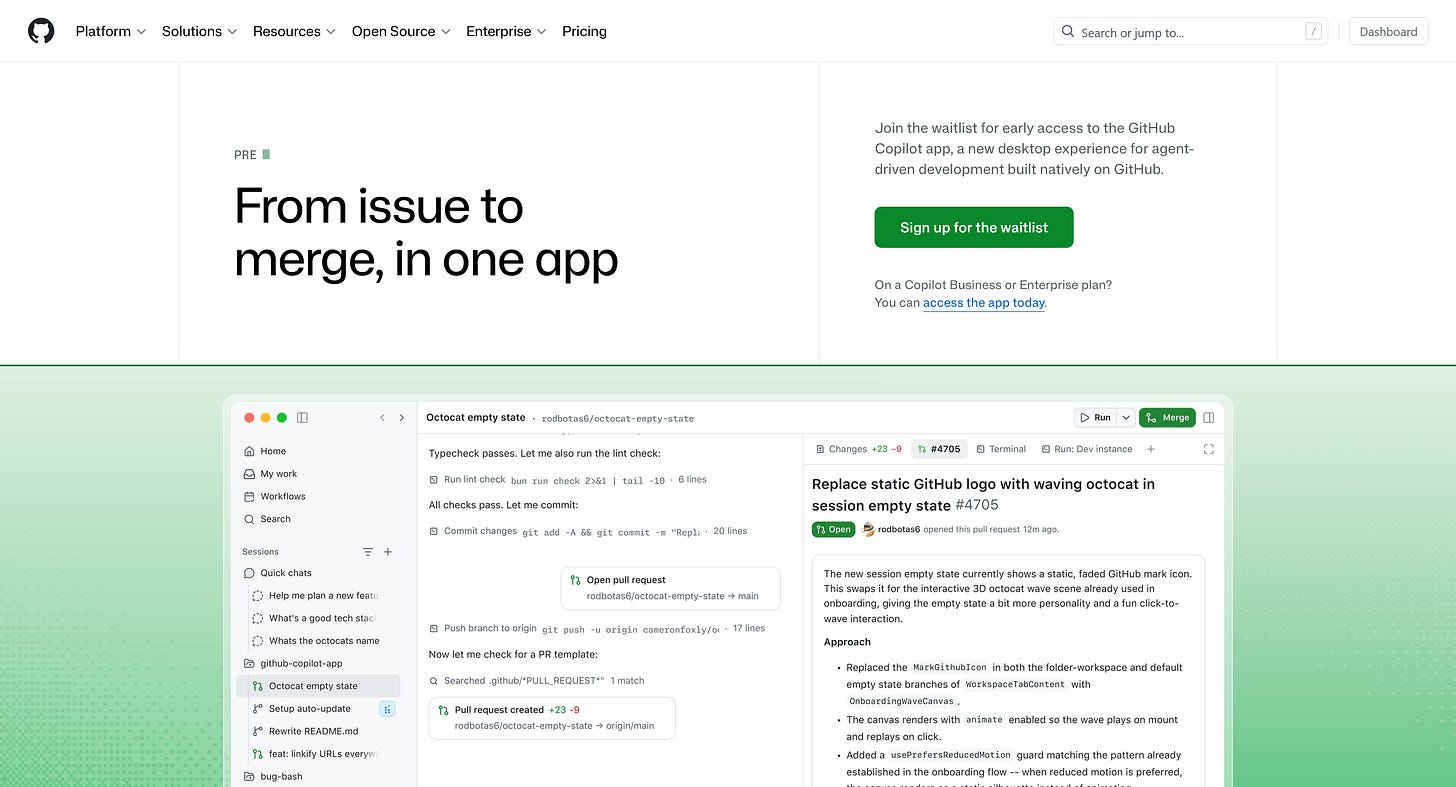

GitHub put Copilot in its own app

GitHub announced the Copilot app on May 15, a desktop client that wraps issue pickup, parallel agent sessions, diff review, and merge into a single surface. Business and Enterprise customers can use it today. Everyone else is on a waitlist. The marketing copy explicitly frames the product as “from issue to merge, in one app,” with agents extensible via MCP servers and custom skills.

Two weeks after announcing usage-based billing, GitHub is also building the surface that makes the meter run. The order matters. If your agents are going to bill you by the token, you want them in an app you control, not in someone else’s IDE.

https://github.com/features/preview/github-app

🕵️ Undercovered

Singapore’s Canvas got breached by ShinyHunters

On May 8, the Cyber Security Agency of Singapore (CSA) confirmed it had reached out to Singapore institutions affected by a cyberattack on Instructure-owned Canvas. SIM, NUS, SUSS, NTUC LearningHub, and Kaplan all run on the platform. SIM publicly confirmed the breach hit its students and faculty.

Instructure detected unauthorised activity on April 29, then identified additional activity tied to the same actor on May 7, taking Canvas offline globally for “maintenance” while it contained the incident. Leaked data includes names, email addresses, student IDs, and messages between Canvas users. ShinyHunters, the same loose teenage extortion crew tied to the Ticketmaster hit, claimed responsibility.

The mainstream coverage framed this as a global outage. The Singapore angle is that an entire generation of local tertiary students just had their identity info land in an extortion crew’s dump, and nobody outside CNA covered it properly.

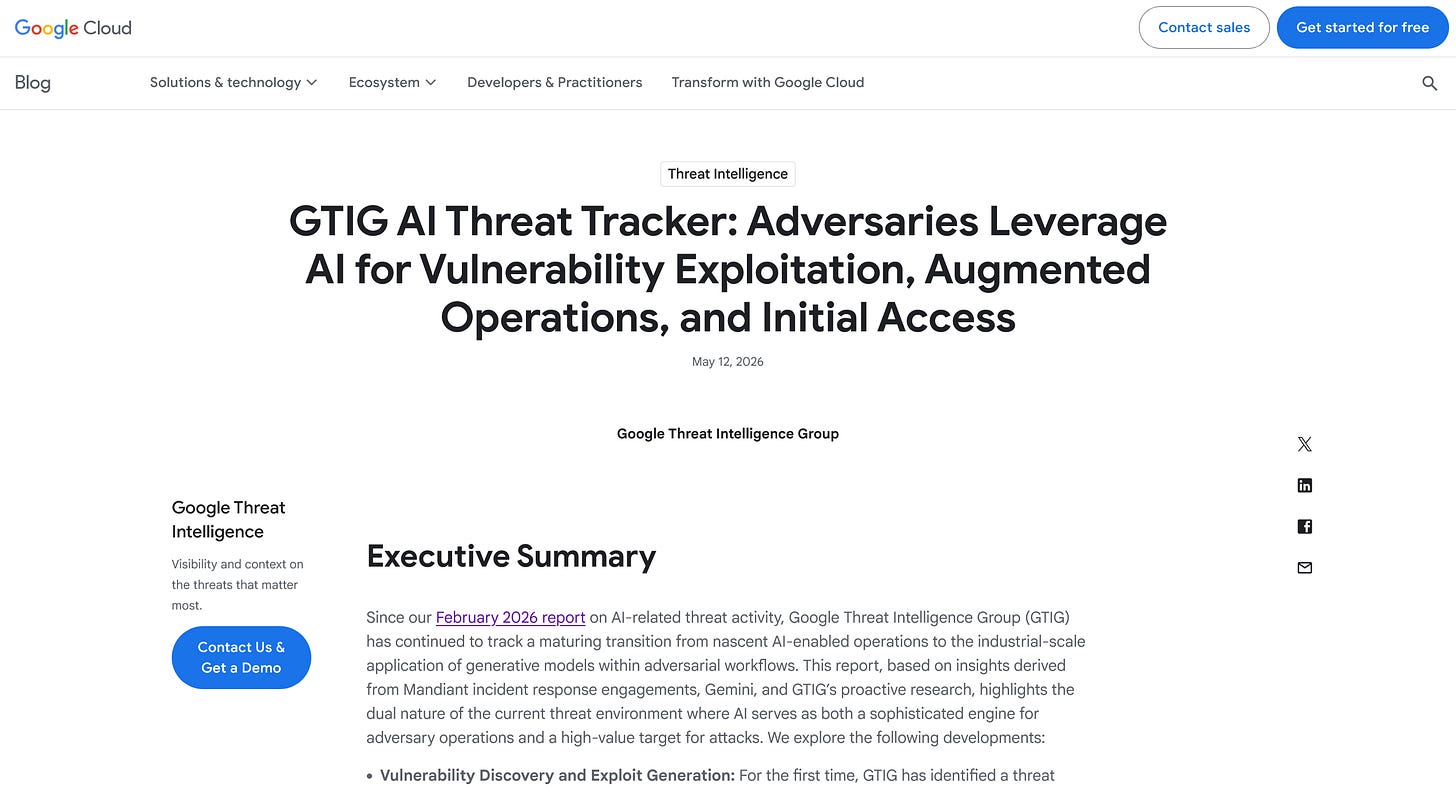

Google’s threat intel group caught the first AI-developed zero-day

On May 11, Google’s Threat Intelligence Group published its latest AI threat tracker and disclosed something new. A cybercrime group used what GTIG assesses is an AI-developed zero-day to bypass 2FA on a popular open-source web admin tool. GTIG worked with the vendor to patch it before the planned mass-exploit campaign launched.

The same report shows PRC- and DPRK-linked actors jailbreaking Gemini with expert personas and using a Claude skill plugin called wooyun-legacy, which encodes 85,000 real Chinese bug-bounty vulnerabilities to prime the model for code analysis. APT45 is sending thousands of recursive prompts against CVE lists. PRC-nexus APT27 used Gemini to help build an ORB anonymisation network. PROMPTSPY, an Android backdoor, now embeds a hardcoded prompt that asks Gemini to navigate the device UI on the attacker’s behalf.

The honest framing: defenders no longer have the asymmetry. Attackers now write exploits with the same tools, and they’re running thousands of attempts where humans used to run dozens.

Lars Faye wrote the agentic-coding-is-a-trap essay everyone was thinking

Lars Faye’s May 12 essay is the clearest counter to the “human as orchestrator” framing that took over dev Twitter this year. He pulls together a recent Anthropic study (47% drop-off in debugging skills among heavy AI users), Simon Willison’s own admission that he no longer has a “firm mental model” of his apps, and Sandor Nyako of LinkedIn telling 50 engineers not to use coding agents for anything that requires critical thinking.

The thesis lands hard: “the use of coding agents is actively diminishing the very skills needed to effectively manage the coding agents.” Anthropic’s own paper calls this the paradox of supervision. The essay also names the vendor-lock-in risk in plain language, which most AI-positive devs avoid.

This is the post you’d send to a junior dev before they spend six months prompt-engineering their way out of being able to write a loop.

https://larsfaye.com/articles/agentic-coding-is-a-trap

Google’s new reCAPTCHA locks out de-Googled Android

Google updated its reCAPTCHA verification flow on April 22 at Cloud Next, and Android Authority caught the impact on May 7. Suspicious-looking traffic now hits a QR code challenge that requires Google Play Services 25.41.30 or higher to complete. If you’re on GrapheneOS, CalyxOS, or /e/OS, you fail the check.

The official workaround is the audio challenge, which de-Googled phones are not optimised to surface either. Google’s framing is that hardware attestation is the only reliable defence against AI bots. The result is that the privacy-Android stack is now functionally locked out of any site that uses reCAPTCHA, which is a large share of the web. The web just got a new tier system, and Google built the gate.

https://cybersecuritynews.com/google-recaptcha-update

🗄️ The Vault

Orca

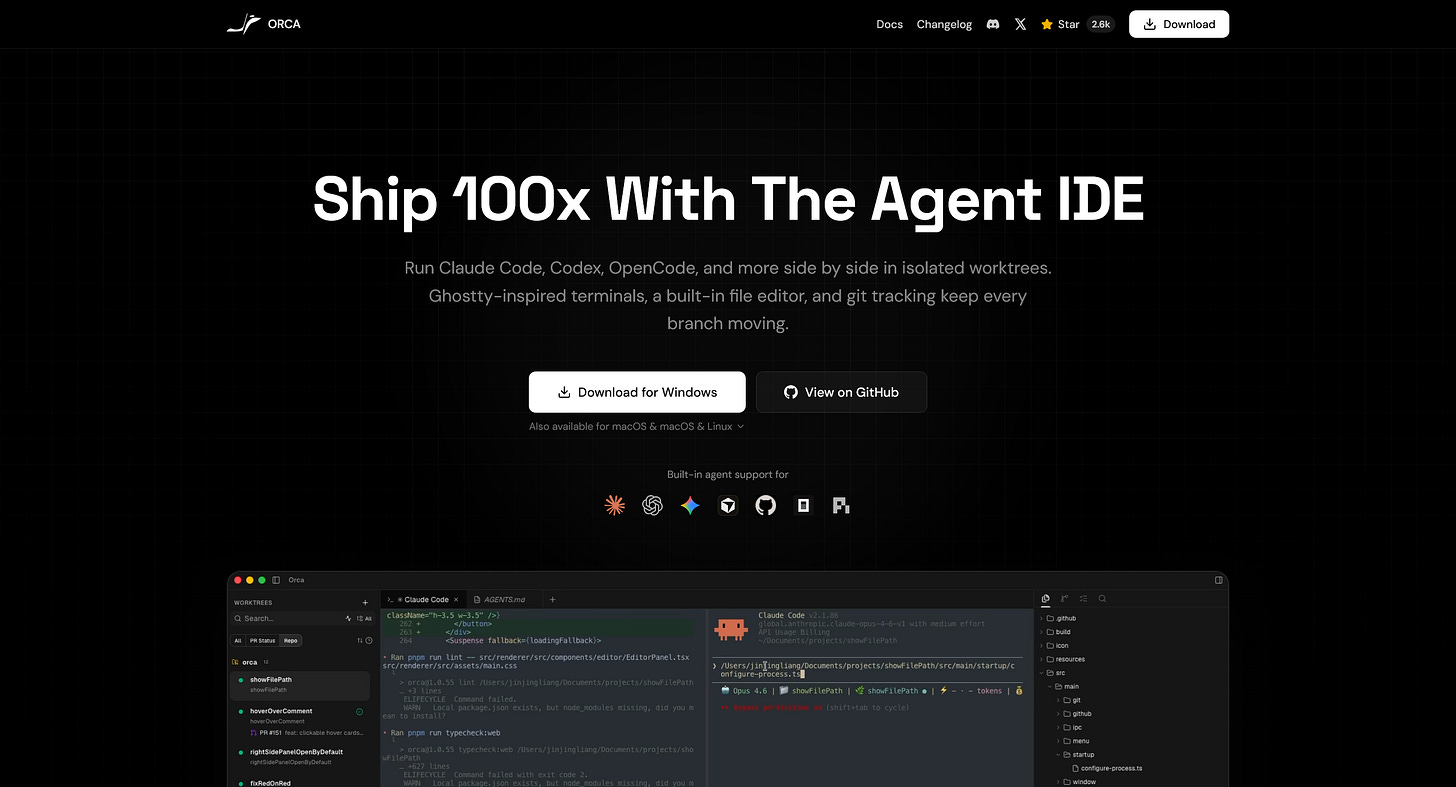

The next-gen IDE for working with a fleet of parallel coding agents. Bring your own Claude Code, Codex, Grok, OpenCode, or Gemini subscription. Every feature gets its own git worktree. Multi-agent terminals run in tabs and panes with at-a-glance status. Built-in diff review, GitHub integration, SSH worktrees, and a mobile companion app on iOS and Android. MIT-licensed, 2,557 stars, latest release v1.4.2-rc.10 shipped this morning. The most concrete answer yet to what the IDE looks like when you have five agents running at once.

https://github.com/stablyai/orca

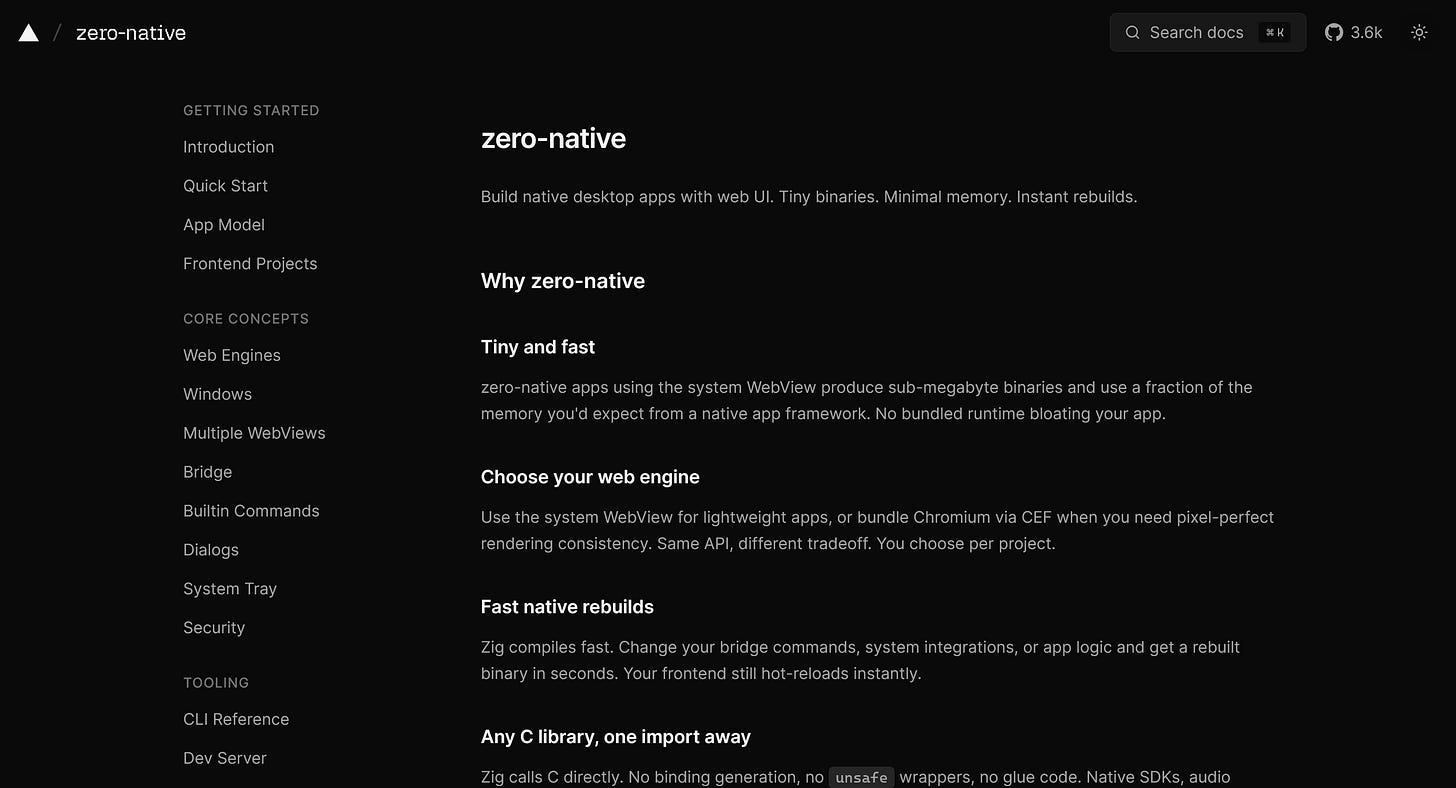

zero-native

Build native desktop apps with web UI in Zig. Sub-megabyte binaries using the system WebView, or bundle Chromium via CEF when you need pixel-perfect rendering. Hot-reload frontend, fast Zig rebuilds for the bridge layer. Calls any C library directly with no binding generation. macOS and Linux shells today, Windows and mobile in progress. The under-the-radar answer to Tauri’s complexity tax.

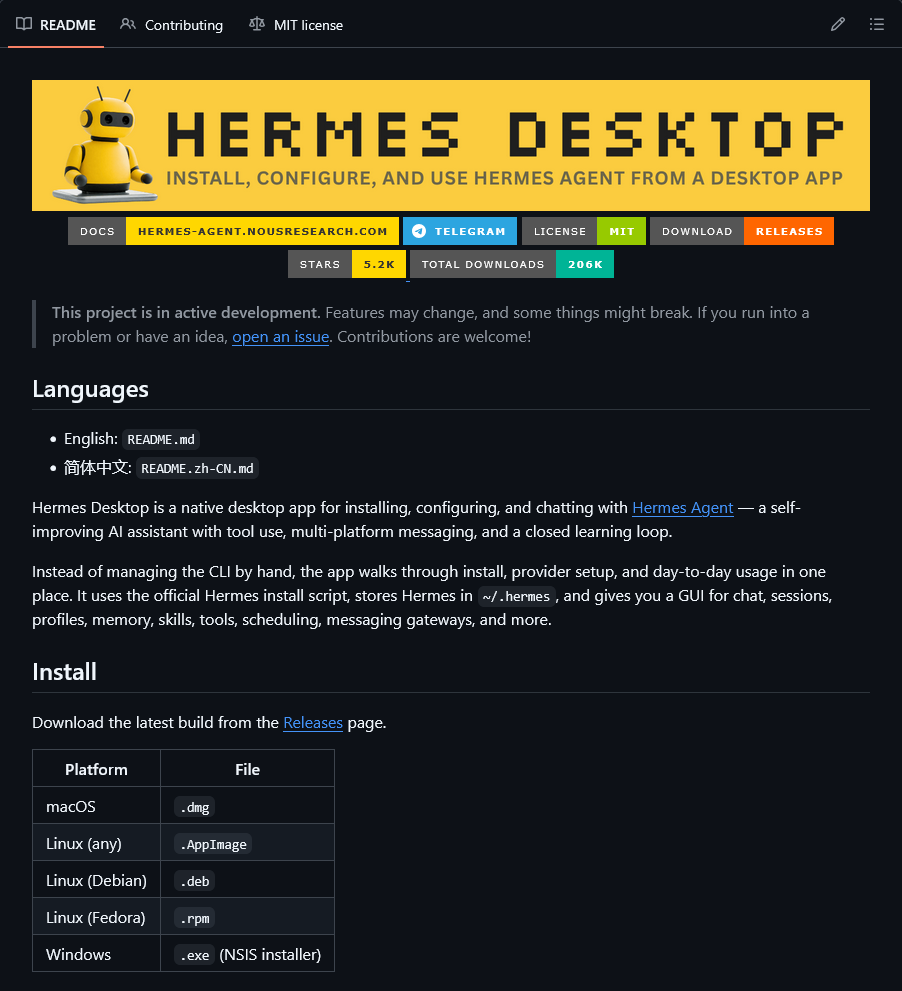

Hermes Desktop

A native macOS, Windows, and Linux companion app for Hermes Agent, the self-improving NousResearch agent with tool use, multi-platform messaging, and a closed learning loop. Walks you through install, provider setup, profile switching, and 22 slash commands. 14 toolsets covering web, browser, terminal, code execution, image gen, TTS, and memory. 16 messaging gateways including Telegram, Discord, Slack, WhatsApp, Signal, Matrix, and iMessage. MIT-licensed.

https://github.com/fathah/hermes-desktop

unlumen UI

A clean, command-palette-first React component library. Search-driven navigation, opinionated defaults, and the kind of restraint shadcn drifted away from in the last year. Useful when you want a starter library that doesn’t make every page look like the same template.

🔥 This Week’s Pick

Coding agents stopped being plugins

For most of 2025 the typical coding agent lived inside something else. Claude Code was a CLI on top of your terminal. Cursor was a fork of VS Code. Copilot was an extension. The defining trait of every shipping product was that it borrowed someone else’s surface to be useful.

This week three big launches broke that pattern at the same time.

GitHub announced the Copilot app, a dedicated desktop client that wraps the entire issue-to-merge loop. Agents run in parallel, scoped to repos, with MCP servers and custom skills as the extension model. It’s GitHub quietly admitting that the IDE plugin form factor doesn’t actually fit the way agents work.

xAI shipped Grok Build, a CLI built around parallel subagents, marketplace-style skills, and a plan viewer for architecting complex work. It’s the first xAI tool that exists primarily to let you build things instead of chat about them, and the first to ship as part of an existing subscription rather than as a metered API product.

Raycast quietly published the most considered launch of the three. The new Raycast isn’t an AI feature on top of a launcher. It’s a launcher rebuilt around AI. Quick AI and AI Chat share a composer. Skills installed locally are auto-discovered. A new disk indexer replaces Spotlight. System-wide dictation is free during the beta, which is the kind of subsidy you only pay when you’ve decided the next decade of personal computing happens inside your app.

Three different bets on form factor, all shipping within two weeks of Apple writing a $250M cheque for the AI Siri that never shipped. The contrast is the point. The companies that move first on AI are the ones building the surface they think it lives in. The companies that move late are the ones writing settlements for the surfaces that aged out.

The takeaway for builders: the next twelve months are not going to be about which model is best. They’re going to be about whose app you happen to be inside when you decide to use it. Pick carefully.

🧪 This Week’s Experiments

Read Lars Faye’s “Agentic Coding is a Trap” and audit one task this week you’d normally hand to an agent: do it manually and notice what you’d have missed

Try Orca for one feature you’d normally split across multiple Claude Code worktrees, and see whether parallel-agent orchestration earns its complexity